What to Look for in a Gamification Engine Before You Buy

What a gamification engine actually does

A gamification engine is not a bag of points and badges. It’s a rules system that listens for user actions, evaluates logic, updates state, and returns timely feedback that nudges behavior. Think of it as a compact behavior-change service: events in, decisions out.

In practice, an engine usually handles:

- Event ingestion. Capture actions from apps, web, QR scans, GPS check-ins, NFC taps, kiosks, or admin triggers.

- Rule evaluation. Translate events into outcomes using conditions, segments, cooldowns, streak logic, and progression thresholds.

- State updates. Persist points, levels, badges, quests, streaks, and inventory. Maintain anti-abuse flags and audit logs.

- Feedback. Push in-app toasts, emails, SMS, push notifications, or on-screen progress to close the loop fast.

- Economy. Track earnable and spendable currencies, reward catalogs, throttles, and budgets.

- Analytics. Report participation, funnel drop-offs, challenge completion rates, and leaderboards.

- Ops tooling. Give non-developers a console for configuring challenges, targeting, rewards, and content without a deploy.

A pattern we keep seeing: the best engines make it trivial to try a new rule, limit its blast radius to a small segment, and measure the impact without waiting on a deploy. That’s where durable engagement gains tend to show up.
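To make "events in, decisions out" concrete, here is a minimal sketch of that loop in Python. The class names, the `qr_scan` action, and the point value are all hypothetical, and a real engine would persist state and deliver feedback over a proper channel rather than returning strings.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Event:
    user_id: str
    action: str  # hypothetical action names such as "qr_scan"


@dataclass
class Rule:
    name: str
    condition: Callable[[Event], bool]
    points: int
    message: str


@dataclass
class ParticipantState:
    points: int = 0
    audit_log: list[str] = field(default_factory=list)


class Engine:
    def __init__(self, rules: list[Rule]) -> None:
        self.rules = rules
        self.state: dict[str, ParticipantState] = {}

    def handle(self, event: Event) -> list[str]:
        """Evaluate every rule against the event, update state, return feedback."""
        participant = self.state.setdefault(event.user_id, ParticipantState())
        feedback = []
        for rule in self.rules:
            if rule.condition(event):
                participant.points += rule.points
                participant.audit_log.append(f"{rule.name}: +{rule.points}")
                feedback.append(rule.message)
        return feedback


engine = Engine([
    Rule("qr_found", lambda e: e.action == "qr_scan", 30, "Artifact found! +30"),
])
print(engine.handle(Event("u1", "qr_scan")))    # a rule matches, feedback is returned
print(engine.handle(Event("u1", "page_view")))  # no rule matches, no feedback
```

Keeping rules as data rather than code paths is what lets an ops console add or change them without a deploy.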

At a Glance

- Real-time rule evaluation and fast feedback correlate most with sustained participation.

- Engines that support experimentation, segmentation, and anti-abuse controls outperform cosmetic “PBL” add-ons.

- Security, privacy, and export-friendly data models are table stakes, not nice-to-haves.

- Build when it is core differentiation and you can staff it for years; otherwise buy or embed.

Evidence: what reliably moves behavior (and what doesn’t)

The research is clear enough to guide buying decisions. Meta-analyses find positive, but context-dependent, effects for gamification, with stronger outcomes when design elements align with goals and social context rather than generic points-badges-leaderboards. In education, for example, a 2020 meta-analysis reported significant effects on cognitive outcomes and noted that elements like collaboration and meaningful narrative matter more than raw points. (link.springer.com)

Two reliable design anchors show up repeatedly:

- Self-Determination Theory alignment. Experiences that support autonomy, competence, and relatedness tend to sustain motivation longer than purely extrinsic rewards. Engines that can express mastery paths, choices, and team mechanics have an advantage. (pmc.ncbi.nlm.nih.gov)

- Immediate, contingent feedback. Behavior science shows that contingent reinforcement and timely signals increase response rates. Variable ratio schedules, used thoughtfully, drive high, steady participation, but require ethical guardrails. (pmc.ncbi.nlm.nih.gov)

On the flip side, leaderboards without segmentation, runaway reward economies, and one-size-fits-all point grants typically deliver a short spike, then decay. Engines that make segmentation, fairness controls, and economy tuning easy help you avoid that arc.
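For readers who want to see what a variable ratio schedule with a guardrail might look like, here is a small sketch. It assumes the gap between bonuses is drawn uniformly around a mean, and it uses a daily cap as the ethical guardrail; both choices, along with the class and parameter names, are illustrative rather than taken from any particular engine.

```python
import random


class VariableRatioReward:
    """Grant a bonus after an unpredictable number of qualifying actions.

    The gap between bonuses is drawn uniformly from 1..(2 * mean_ratio - 1),
    so it averages mean_ratio. daily_cap is the guardrail (an assumption).
    """

    def __init__(self, mean_ratio: int, daily_cap: int, rng: random.Random) -> None:
        self.mean_ratio = mean_ratio
        self.daily_cap = daily_cap
        self.rng = rng
        self.granted_today = 0
        self.remaining = self._next_gap()

    def _next_gap(self) -> int:
        return self.rng.randint(1, 2 * self.mean_ratio - 1)

    def record_action(self) -> bool:
        """Return True when this action earns the bonus."""
        self.remaining -= 1
        if self.remaining > 0:
            return False
        self.remaining = self._next_gap()
        if self.granted_today >= self.daily_cap:
            return False  # guardrail: the schedule continues, the bonus is withheld
        self.granted_today += 1
        return True

    def reset_day(self) -> None:
        self.granted_today = 0
```

Passing in a seeded `random.Random` keeps the schedule reproducible in tests while staying unpredictable to participants.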

The features that matter (and why)

Below are the capabilities that separate engines you can scale with from engines you’ll outgrow.

- Rules engine with real-time evaluation. Look for condition builders, cooldowns, streak logic, time windows, and priority resolution. Real-time responses reinforce behavior better than delayed batch updates.

- Challenge and quest modeling. Missions with multi-step logic, prerequisites, and optional paths support mastery and autonomy. Tie challenge completion to narrative, not just points.

- Progression systems. Levels, tiers, and prestige cycles keep advanced users engaged without crushing newcomers. Soft caps and catch-up mechanics prevent stratification.

- Leaderboards that don’t invite abuse. Insist on server-validated submissions, time-bounded boards, and anti-cheat checks. Authoritative leaderboards, where the server writes final results, are a practical baseline. (heroiclabs.com)

- Economy and rewards. Dual-currency designs, limited-time boosts, and budget controls help you pace rewards. Inventory and redemption workflows should be auditable.

- Segmentation and targeting. Rules should target by cohort, behavior, or context. You need knobs to shape experiences for first-timers, veterans, and lapsed participants.

- Experimentation. Built-in A/B test hooks or clean integration with your experimentation platform. Guardrail metrics and exposure logs are non-negotiable if you plan to optimize. Microsoft’s long-running work on trustworthy online experiments is a useful benchmark for what “good” looks like. (researchgate.net)

- APIs, SDKs, and webhooks. Modern engines expose REST/GraphQL, mobile SDKs, and webhooks with retries. Prefer idempotent writes and signed webhooks to handle retries safely. Stripe’s guidance on idempotency and webhook delivery is a solid reference point. (stripe.com)

- Admin and moderation. Non-technical users need safe editing, preview, staged publishing, and rollback. Moderation queues and profanity filters keep UGC challenges defensible.

- Analytics and export. Event streams to your warehouse, explorer-grade dashboards, and raw exports. You will outgrow black-box charts.

- Access and auth. SSO, SAML, and role-based permissions so security teams do not block you later.

- Localization and accessibility. Multi-language content, right-to-left support, and WCAG-friendly interfaces signal maturity.
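Cooldowns and streak logic from the first item in this list are easy to describe and easy to get subtly wrong, so a short sketch may help when you quiz vendors. Everything here is an assumption for illustration: the ten-minute cooldown, the daily streak definition, and the bonus of 5 points per extra streak day.

```python
from dataclasses import dataclass
from datetime import date, datetime, timedelta
from typing import Optional


@dataclass
class Progress:
    last_award_at: Optional[datetime] = None
    last_active_day: Optional[date] = None
    streak: int = 0


def cooldown_ok(progress: Progress, now: datetime, cooldown: timedelta) -> bool:
    """True when enough time has passed since the last award."""
    return progress.last_award_at is None or now - progress.last_award_at >= cooldown


def update_streak(progress: Progress, today: date) -> int:
    """Extend the streak on consecutive days, reset it after a gap."""
    if progress.last_active_day == today:
        return progress.streak  # already counted today
    if progress.last_active_day == today - timedelta(days=1):
        progress.streak += 1
    else:
        progress.streak = 1
    progress.last_active_day = today
    return progress.streak


def award(progress: Progress, now: datetime, base_points: int,
          cooldown: timedelta = timedelta(minutes=10)) -> int:
    """Points for one action: 0 during cooldown, else base plus a streak bonus."""
    if not cooldown_ok(progress, now, cooldown):
        return 0
    streak = update_streak(progress, now.date())
    progress.last_award_at = now
    return base_points + 5 * (streak - 1)  # hypothetical bonus: +5 per extra day
```

A good condition builder exposes exactly these knobs (window, cooldown, bonus curve) as configuration rather than code.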

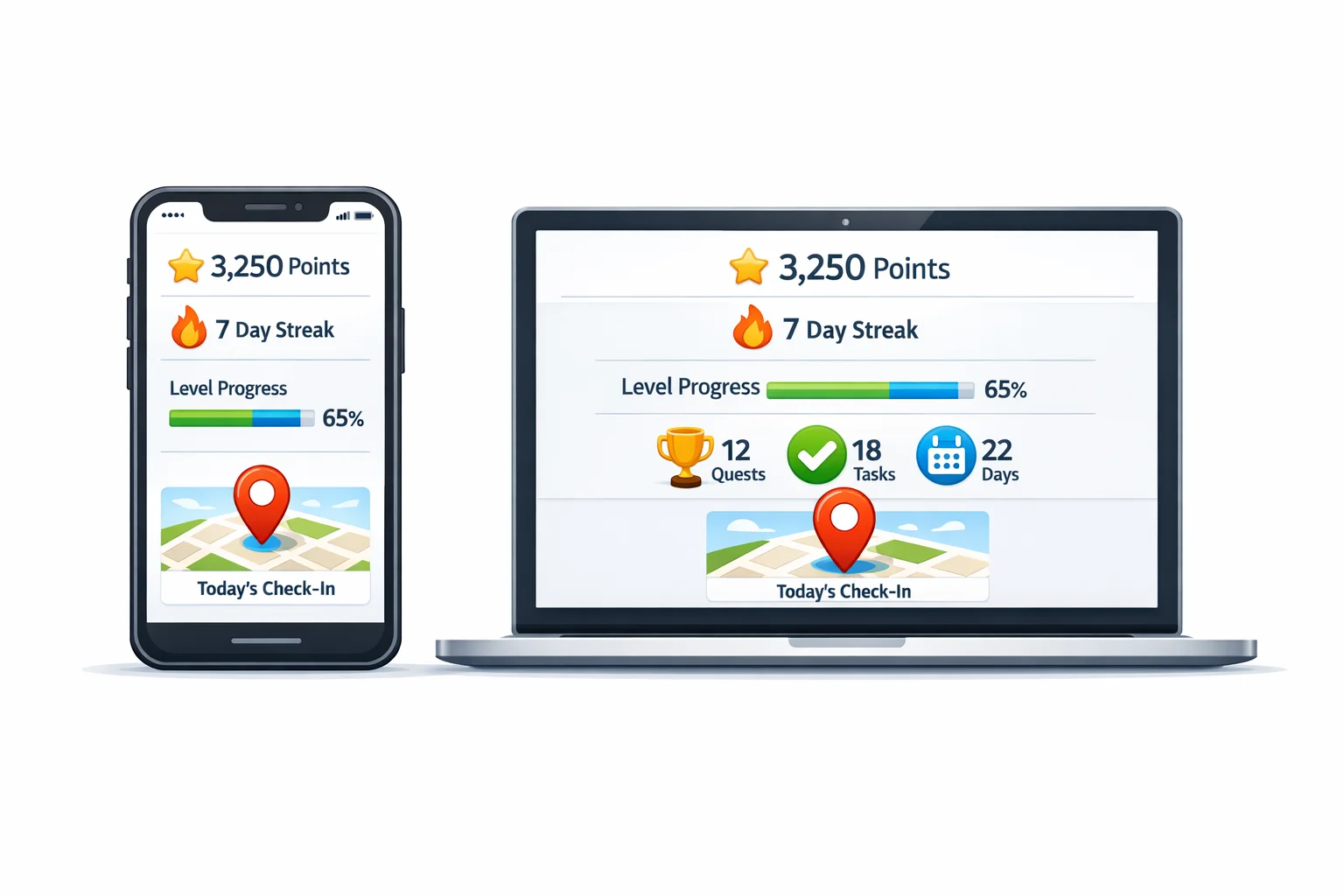

A quick illustration: challenge variety done right

When an engine supports mixed challenge types, you can engage different motivations and contexts. Five examples we’ve seen work in team-building and orientation:

- [Photo | 40 pts]: Capture a moment that shows “our culture in one frame.”

- [Video | 70 pts]: In under 10 seconds, share one tip you wish you’d had as a new hire.

- [GPS Check-in | 50 pts]: Check in at the space where big decisions get made.

- [QR Code | 30 pts]: Find the artifact that started it all.

- [Q&A | 40 pts]: What surprised you most during onboarding today, and why?

Engines that make this mix simple to configure and measure usually deliver broader, longer-lasting participation.
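As a rough picture of what "simple to configure" can mean, the five challenges above could be expressed as plain data. The type names and fields below are hypothetical, not Scavify's or any vendor's actual schema.

```python
from dataclasses import dataclass
from enum import Enum


class ChallengeType(Enum):
    PHOTO = "photo"
    VIDEO = "video"
    GPS_CHECKIN = "gps_checkin"
    QR_CODE = "qr_code"
    QA = "qa"


@dataclass(frozen=True)
class Challenge:
    kind: ChallengeType
    points: int
    prompt: str


CHALLENGES = [
    Challenge(ChallengeType.PHOTO, 40, "Capture a moment that shows our culture in one frame."),
    Challenge(ChallengeType.VIDEO, 70, "In under 10 seconds, share a new-hire tip."),
    Challenge(ChallengeType.GPS_CHECKIN, 50, "Check in where big decisions get made."),
    Challenge(ChallengeType.QR_CODE, 30, "Find the artifact that started it all."),
    Challenge(ChallengeType.QA, 40, "What surprised you most during onboarding today?"),
]


def max_score(challenges: list[Challenge]) -> int:
    """Total points available if a participant completes everything."""
    return sum(c.points for c in challenges)


def points_by_type(challenges: list[Challenge]) -> dict[ChallengeType, int]:
    """Points available per challenge type, useful for balancing the mix."""
    totals: dict[ChallengeType, int] = {}
    for c in challenges:
        totals[c.kind] = totals.get(c.kind, 0) + c.points
    return totals
```

With the mix held as data, checking whether one challenge type dominates the available points becomes a one-line query.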

Architecture traits that separate solid engines from fragile ones

An engine is, at heart, an event-driven system. The invisible parts matter.

- Event pipeline. Ingest events reliably, deduplicate, and evaluate rules quickly. Idempotency on mutating endpoints prevents double-awards when networks misbehave. Practical approaches mirror Stripe’s idempotency patterns. (stripe.com)

- Webhook guarantees. Expect at-least-once delivery, exponential backoff, signatures, and replay protections. Your handler must be idempotent because retries are normal. Stripe’s documentation sets a sensible baseline for retry behavior and verification. (docs.stripe.com)

- State model. Keep participant, progression, and economy states discrete. Support atomic updates and audit trails to unwind mistakes cleanly.

- Scalability and latency. Aim for low-latency rule evaluation and plan for write-heavy workloads. Caches and queues are fine, but accept eventual consistency only where it does not harm fairness.

- Multi-tenancy and isolation. If you serve multiple brands or business units, you want namespace isolation, per-tenant configs, and throttles.

- Observability. Emit structured events, traces, and metrics. Count every award, revoke, and redemption.

If the vendor can’t describe their retry schedule, idempotency strategy, and data model in plain terms, prepare for unwanted surprises under load.
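To ground the idempotency and signature points, here is a minimal sketch of a webhook handler that verifies an HMAC-SHA256 signature and ignores repeated deliveries. It is a generic pattern, not Stripe's exact scheme: the payload fields (`event_id`, `user_id`, `points`), the shared secret, and the in-memory stores are assumptions, and it omits timestamp-based replay protection for brevity.

```python
import hashlib
import hmac
import json

SECRET = b"replace-with-shared-secret"  # hypothetical shared secret


def sign(payload: bytes, secret: bytes = SECRET) -> str:
    """Hex HMAC-SHA256 of the raw payload."""
    return hmac.new(secret, payload, hashlib.sha256).hexdigest()


def verify(payload: bytes, signature: str, secret: bytes = SECRET) -> bool:
    """Constant-time comparison of the expected and received signatures."""
    return hmac.compare_digest(sign(payload, secret), signature)


class AwardHandler:
    def __init__(self) -> None:
        self.balances: dict[str, int] = {}
        self.seen_event_ids: set[str] = set()

    def handle(self, payload: bytes, signature: str) -> str:
        if not verify(payload, signature):
            return "rejected"
        event = json.loads(payload)
        if event["event_id"] in self.seen_event_ids:
            return "duplicate"  # a retry of an event we already applied
        self.seen_event_ids.add(event["event_id"])
        user = event["user_id"]
        self.balances[user] = self.balances.get(user, 0) + event["points"]
        return "applied"
```

In a real system the dedupe record and the balance update would be written in one transaction, so a crash between them cannot cause a lost or double award.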

Security, privacy, and compliance requirements you should insist on

- SOC 2 alignment. Look for vendors who can map controls to the Trust Services Criteria across security, availability, processing integrity, confidentiality, and privacy. Reference material on the framework is published by AICPA. (aicpa-cima.com)

- ISO 27001 posture. A recognized information security management standard helps ensure discipline around risk, access, and change. An IBM overview is a clear primer if you need an exec-friendly explainer. (ibm.com)

- GDPR principles. If you touch EU residents, design for data minimization, purpose limitation, and rights handling. The European Commission’s overview is the authoritative source. (commission.europa.eu)

- California privacy. Plan for CCPA rights, as amended by the CPRA, if you handle personal information of California residents. The California Privacy Protection Agency and Attorney General publish official guidance. (privacy.ca.gov)

- Badge portability. If you issue badges or credentials, support the 1EdTech Open Badges standard so achievements are verifiable and portable. Version 3.0 aligns with modern verifiable credentials. (1edtech.org)

None of these frameworks are optional once you scale. Engines that already speak this language will save you months of back-and-forth with security and legal.

Build vs. buy vs. embed: a practical decision framework

We’ve watched teams burn a year building core mechanics, then another year hardening webhooks, cleaning data models, and adding the admin features stakeholders expected on day one. Sometimes that investment makes sense. Often it doesn’t.

Use this lens:

- Build if gamification is central to your differentiation, you have durable product and platform teams, and you can maintain it for years without starving other priorities.

- Buy if you need a proven system with admin tools, analytics, and compliance posture out of the box. Keep the architecture open so you can export data and extend rules when needed.

- Embed if your product needs deep in-app mechanics but you want to ship fast using a developer-first API or PaaS, while keeping UI and brand native.

A useful reference for weighing third-party solutions against building is Thoughtworks’ strategic framework. It frames the tradeoffs beyond sticker price to include time-to-value, integration depth, and future flexibility. (thoughtworks.com)

Where Scavify fits naturally: if your use case is challenge-based engagement for events, onboarding, campuses, or tourism, an app-based system with automation and flexible challenge types lets you launch in days, learn quickly, and scale. If your use case is embedded, always-on product mechanics with heavy customization, evaluate API-first providers alongside build options.

Implementation blueprint: from pilot to scale without the drag

A straightforward path that keeps momentum and avoids big-bang risk:

1) Define the target behaviors. List the 3 to 5 actions that matter and why. Tie each to an outcome metric you already track.

2) Map mechanics to motivations. Offer choice for autonomy, visible progress for competence, and team play for relatedness. SDT-aligned mechanics tend to sustain interest. (pmc.ncbi.nlm.nih.gov)

3) Instrument the loop. Emit events for attempts, successes, fails, and abandons. Tag by cohort.

4) Start small, ship weekly. Launch with a narrow set of challenges and a single leaderboard. Expand mechanics once you have signal.

5) Experiment on purpose. Split traffic, set guardrails, and treat novelty boosts with skepticism. The “rules of thumb” from large-scale experimentation cultures are worth internalizing. (researchgate.net)

6) Watch fairness and fatigue. Add caps, decay, and catch-up mechanics. Rotate challenges to avoid saturation.

7) Plan for abuse early. Use server-side validation for scores, require signed payloads, and design idempotent handlers so webhook retries do not duplicate awards. Stripe’s docs show sane patterns you can adapt. (docs.stripe.com)

8) Close the loop with meaning. Timely, relevant feedback beats generic confetti. Tie rewards to identity and team outcomes, not just trinkets.
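Step 5 depends on splitting traffic consistently and logging who saw what. One common approach, sketched below under our own assumptions, is to hash the user and experiment name into a bucket and record every exposure. The function names, the variant labels, and the in-memory log are hypothetical.

```python
import hashlib

# In-memory exposure log for illustration; a real system would emit events.
exposure_log: list[tuple[str, str, str]] = []


def assign_variant(user_id: str, experiment: str,
                   variants: tuple[str, ...] = ("control", "treatment")) -> str:
    """Deterministically map a user to a variant for a given experiment."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    return variants[int(digest, 16) % len(variants)]


def expose(user_id: str, experiment: str) -> str:
    """Assign the variant and record the exposure for later analysis."""
    variant = assign_variant(user_id, experiment)
    exposure_log.append((experiment, user_id, variant))
    return variant
```

Including the experiment name in the hash keeps assignments independent across experiments, so the same users do not always land in treatment together.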

Common mistakes that quietly tank engagement

- Points everywhere. Granting points for every click numbs the signal. Save points for meaningful actions and outcomes.

- One giant leaderboard. Without segmentation, top performers lap the field and newcomers check out.

- Static challenges. Monthly refreshes are the floor. Weekly micro-iterations keep energy alive.

- Unbounded reward economies. Inflation kills perceived value. Set budgets, sinks, and soft caps.

- Black-box analytics. If you can’t export raw events, you can’t answer real questions.

- Security as an afterthought. Retrofitting SOC 2 controls or GDPR rights handling is expensive. Start with a vendor and a model that support them.
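The fix for unbounded reward economies can be sketched in a few lines: a campaign-wide budget plus a per-participant soft cap with diminishing returns. The class name, the 50% rate above the cap, and the numbers in the usage note are illustrative assumptions.

```python
class RewardBudget:
    """Campaign budget with a per-participant soft cap (hypothetical design)."""

    def __init__(self, campaign_budget: int, soft_cap: int,
                 rate_after_cap: float = 0.5) -> None:
        self.remaining = campaign_budget
        self.soft_cap = soft_cap
        self.rate_after_cap = rate_after_cap
        self.earned: dict[str, int] = {}

    def grant(self, user_id: str, points: int) -> int:
        """Return the points actually granted after the cap and budget apply."""
        earned = self.earned.get(user_id, 0)
        below_cap = max(0, min(points, self.soft_cap - earned))
        above_cap = points - below_cap
        # Points beyond the soft cap are paid at a reduced rate.
        adjusted = below_cap + int(above_cap * self.rate_after_cap)
        granted = min(adjusted, self.remaining)  # never exceed the budget
        self.remaining -= granted
        self.earned[user_id] = earned + granted
        return granted
```

For example, with a budget of 200 and a soft cap of 100, a participant who already holds 80 points and earns 40 more receives 20 at full rate and 20 at half rate.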

A fast RFP checklist you can actually use

- Rules & mechanics. Real-time evaluation, streaks, cooldowns, prerequisites, quest chains, multi-currency, segmentation.

- Leaderboards & fairness. Server-validated scores, time windows, decay, fraud detection, audit logs. (heroiclabs.com)

- Experimentation. Native A/B or clean integration with your platform, exposure logging, guardrails. (microsoft.com)

- APIs & webhooks. Idempotent writes, signed webhooks, retries with exponential backoff, replay protection. (stripe.com)

- Data & analytics. Event export, warehouse feeds, cohorting, funnels, LTV proxies.

- Security & privacy. SOC 2 alignment, ISO 27001 posture, GDPR and CPRA/CCPA readiness. (aicpa-cima.com)

- Credentials. Open Badges 2.1/3.0 support if you issue verifiable achievements. (1edtech.org)

- Admin UX. Roles, staging, preview, rollback, moderation tools.

- Support model. Clear SLAs, roadmap transparency, and a way to request rules without custom code.
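If you want to turn the checklist into a comparable number per vendor, a weighted score is one simple option. The weights below are placeholders we made up for illustration; set your own, and rate each category on whatever scale your team agrees on (0 to 5 here).

```python
# Hypothetical weights per checklist category; they sum to 100.
WEIGHTS = {
    "rules_mechanics": 20,
    "leaderboards_fairness": 10,
    "experimentation": 10,
    "apis_webhooks": 15,
    "data_analytics": 15,
    "security_privacy": 15,
    "credentials": 5,
    "admin_ux": 5,
    "support_model": 5,
}


def weighted_score(ratings: dict[str, int],
                   weights: dict[str, int] = WEIGHTS) -> float:
    """Weighted average of 0-5 ratings; fails loudly if a category is missing."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total = sum(weights[k] * ratings[k] for k in weights)
    return total / sum(weights.values())
```

A single score hides tradeoffs, so treat it as a tiebreaker after any hard requirements (for example, security review) have passed.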

FAQs

What is a gamification engine in simple terms?

A service that listens for user actions, applies rules, updates a participant’s state, and returns immediate feedback like points, badges, or messages. It centralizes behavior logic so you can iterate quickly without hardcoding everything in your app.

Which gamification features typically drive sustained engagement?

Mechanics that support autonomy, competence, and relatedness, paired with timely, contingent feedback. Translation: offer choice, visible progress, and social connection, then respond immediately when people act. Research grounded in Self-Determination Theory and reinforcement shows these patterns hold up. (pmc.ncbi.nlm.nih.gov)

How do I prevent cheating on leaderboards?

Use server-authoritative writes so clients cannot submit final scores directly, sign and verify payloads, and keep handlers idempotent to survive retries. Time-bounded boards with decay and auditing further reduce abuse. (heroiclabs.com)
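A sketch of what server-authoritative, time-bounded scoring can look like: the client reports an action, and the server decides what it is worth and whether the board is open. The class, the action names, and the point table are hypothetical, and signing and auditing are left out to keep the example short.

```python
from datetime import datetime, timedelta


class Leaderboard:
    """Time-bounded board where the server computes every score."""

    def __init__(self, opens_at: datetime, closes_at: datetime,
                 points_per_action: dict[str, int]) -> None:
        self.opens_at = opens_at
        self.closes_at = closes_at
        self.points_per_action = points_per_action
        self.scores: dict[str, int] = {}

    def record(self, user_id: str, action: str, at: datetime) -> bool:
        """Accept an action only inside the window and only if it is known."""
        if not (self.opens_at <= at < self.closes_at):
            return False
        if action not in self.points_per_action:
            return False
        self.scores[user_id] = self.scores.get(user_id, 0) + self.points_per_action[action]
        return True

    def top(self, n: int) -> list[tuple[str, int]]:
        """Highest scores first; ties broken by user id for stable output."""
        return sorted(self.scores.items(), key=lambda kv: (-kv[1], kv[0]))[:n]
```

Because clients never send a score, a tampered request can at most claim an action, which the server can then validate like any other event.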

Do I need SOC 2 or ISO 27001 for a gamification engine?

If you handle personal data at scale or sell to enterprises, expect security reviews that map to SOC 2 controls and often an ISO 27001 posture. Engines aligned to these frameworks shorten procurement and reduce risk. (aicpa-cima.com)

What’s the difference between buying an app-based solution and embedding an API?

App-based platforms ship with ready-made challenges, admin tools, and participant apps. API-first platforms let you embed mechanics inside your product with your own UI. The right choice depends on whether you need speed to launch or deep in-product control.

How should I measure success?

Tie mechanics to core KPIs: activation, completion, repeat behavior, attendance, or training outcomes. Run controlled experiments with guardrails so you can attribute effects. Microsoft’s published work on trustworthy experimentation is a good north star. (microsoft.com)
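As a starting point for attribution, the difference in completion rates between a control and a treatment group can be checked with a two-proportion z-test. This is a simplified sketch with a hypothetical function name; it assumes independent users and a single metric, and it does not replace the guardrails a full experimentation platform provides.

```python
import math


def completion_lift(control_done: int, control_n: int,
                    treat_done: int, treat_n: int) -> tuple[float, float, float]:
    """Return (absolute lift, z statistic, two-sided p-value)."""
    p_c = control_done / control_n
    p_t = treat_done / treat_n
    pooled = (control_done + treat_done) / (control_n + treat_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / treat_n))
    if se == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (p_t - p_c) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return p_t - p_c, z, p_value
```

For instance, `completion_lift(200, 1000, 250, 1000)` compares a 20% control completion rate with a 25% treatment rate; the numbers are invented for illustration.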

Are badges worth issuing?

Yes, when they signal meaningful mastery and travel with the participant. Use the Open Badges standard so credentials are verifiable and portable across systems. (1edtech.org)

When is building your own engine the right call?

When mechanics are core to your differentiation, you can staff a platform team long-term, and your roadmap demands capabilities not available in mature products. Otherwise, buy or embed and focus your engineers where it matters most. Thoughtworks’ framework can help structure that call. (thoughtworks.com)

If you’re designing challenge-based engagement for team building, onboarding, campuses, or tourism and want a fast, measurable start, Scavify’s challenge variety, automation, and browser-plus-app flexibility are built for exactly that kind of work. If you’re embedding deeper product mechanics, use the criteria above to evaluate API platforms or a build path, then iterate weekly until the participation curve stops sagging.
